In addition to improved performance from individual sensing technologies, including radar and light detection and ranging (LiDAR), other ongoing advances for sensor fusion are required for more complex advanced driver-assistance systems (ADAS) and to achieve the highest levels of autonomous driving (AD).

Future trends in other technologies for sensor fusion

Ongoing advances that improve sensor fusion include edge computing, 5G connectivity, and the integration of artificial intelligence (AI) and machine learning (ML) techniques. These advances will continue to provide more advantages to future systems and address industry and government requirements for greater performance and safety.

In addition, global navigation satellite system (GNSS) technology provides accurate positioning, navigation, and timing (PNT) services worldwide. For ADAS, GNSS provides vehicle positioning by using satellite data to enable accurate navigation and real-time location tracking. It is the only PNT sensor that can provide an absolute rather than a relative position.
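The difference between an absolute and a relative position source can be illustrated with a minimal sketch. It assumes a one-dimensional road, a wheel-odometry speed that reads 5% high, and a noise-free GNSS fix once per second. The class name `Fused1D`, the noise values `q` and `r`, and the scalar Kalman filter itself are illustrative assumptions, not a description of any production ADAS stack.

```python
from dataclasses import dataclass


@dataclass
class Fused1D:
    """Scalar Kalman filter for position along a road, in meters."""
    x: float  # position estimate
    p: float  # variance of the estimate

    def predict(self, velocity: float, dt: float, q: float) -> None:
        # Dead reckoning is a relative update, so uncertainty grows.
        self.x += velocity * dt
        self.p += q

    def update(self, gnss_pos: float, r: float) -> None:
        # A GNSS fix is an absolute measurement, so uncertainty shrinks.
        k = self.p / (self.p + r)  # Kalman gain
        self.x += k * (gnss_pos - self.x)
        self.p *= 1.0 - k


def demo():
    true_v, biased_v = 10.0, 10.5  # m/s; odometry bias is an assumed value
    dt = 0.1
    fused = Fused1D(x=0.0, p=1.0)
    dead_reckoned = 0.0
    for step in range(1, 101):  # 100 steps of 0.1 s = 10 s of driving
        fused.predict(biased_v, dt, q=0.05)
        dead_reckoned += biased_v * dt
        if step % 10 == 0:  # assumed 1 Hz GNSS fix, noise-free for clarity
            fused.update(true_v * step * dt, r=1.0)
    return true_v * 10.0, dead_reckoned, fused.x


truth, dead_reckoned, fused_position = demo()
print(f"truth={truth:.2f} m, dead reckoning={dead_reckoned:.2f} m, "
      f"fused={fused_position:.2f} m")
```

In this sketch the dead-reckoned position drifts without bound, while the periodic absolute fix keeps the fused error small.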

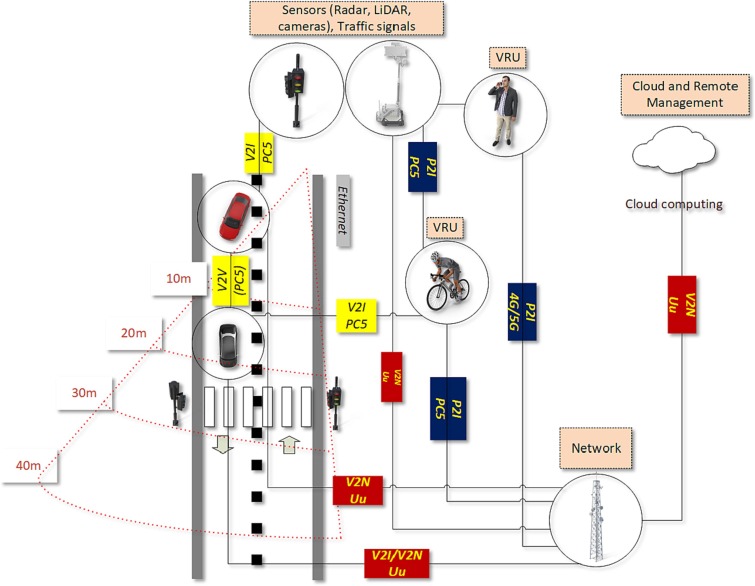

Figure 1 shows the connectivity among many of the elements involved in the real-time operation of an AD vehicle. In addition to the cloud and Dedicated Short Range Communication (DSRC), which is based on IEEE 802.11p wireless technology, the communication links and participants include:

- Vehicle-to-Vehicle (V2V)

- Vehicle-to-Infrastructure (V2I)

- Vehicle-to-Everything (V2X)

- Vehicle-to-Network (V2N)

- Vulnerable Road Users (VRUs)

- Vehicle-to-Pedestrian (V2P)

- Infrastructure/Roadside Sensing Unit (RSU)

- Pedestrian-to-Infrastructure (P2I)

Cellular Vehicle-to-Everything (C-V2X) has two operating modes. One is C-V2X Direct, which uses the PC5 interface. The other is network-based communication over the Uu interface, which enables advanced use cases such as platooning, remote driving, and extended sensors.

Improved edge computing techniques

Current sensor fusion methods for AD vehicles often suffer from high computational cost and high latency on compute-constrained platforms. Edge computing frameworks address this by accelerating data fusion to provide real-time environmental perception, behavior decisions, and navigation control, and by sharing data among all of the entities in Figure 1. Improved edge computing for sensor fusion uses model compression, quantization, and event-driven processing to run complex algorithms on resource-constrained devices, which in turn supports decentralized and collaborative approaches.
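Two of the techniques named above, quantization and event-driven processing, can be sketched in a few lines. The example below is a simplified illustration: symmetric per-tensor int8 quantization is only one of several quantization schemes, and the function names, sample weights, range readings, and the 0.5 m threshold are all hypothetical values chosen for the demonstration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: floats become int8 codes plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from int8 codes."""
    return [c * scale for c in codes]


def event_driven(samples, threshold):
    """Yield a sample only when it differs from the last emitted one by more than threshold."""
    last = None
    for s in samples:
        if last is None or abs(s - last) > threshold:
            last = s
            yield s


# Quantization: each weight is stored in 8 bits instead of 32.
weights = [0.82, -1.27, 0.003, 0.45, -0.61]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("codes:", codes, "max rounding error:", max_error)

# Event-driven processing: skip readings that barely changed.
ranges_m = [20.0, 20.01, 20.02, 19.2, 19.19, 18.1]
events = list(event_driven(ranges_m, threshold=0.5))
print("processed readings:", events)
```

The rounding error of each weight is bounded by half the scale, and only three of the six range readings trigger downstream processing. Both effects reduce the memory and compute load on a resource-constrained device, at the cost of some precision.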

Improved 5G connectivity

The integration of 5G, and eventually 6G, wireless network technology will provide the faster and more reliable connectivity that cloud-based sensor fusion requires. This connectivity will enable seamless communication and data sharing between vehicle and infrastructure sensors, as well as among all of the entities in Figure 1.

Integration of AI and ML

The integration of AI and ML with sensor fusion is one of the most promising trends. AI contributes the ability to identify and interpret complex patterns in sensor data. ML contributes the ability to adapt in real time to changes in the environment and to improve decision-making even under uncertain or noisy conditions.

Combined technologies and design approaches will also provide system advances. For example, the collaboration between BMW and Qualcomm Technologies has led to an AD system built on Qualcomm’s systems-on-chip (SoCs) combined with an AD software stack co-developed by both companies. The system is engineered to meet SAE Level 2+ highway requirements.

Addressing new regulations

SAE has one of the original and fundamental specifications for ADAS and AD, defining driving automation Levels 0 to 5, but newer regulations are appearing that impose more specific requirements on these complex driving situations. For example, China has enacted regulations requiring Level 2 vehicles to meet a detection range of 70.79 meters with a horizontal resolution below 0.2° and a vertical resolution below 0.68°.
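To give a sense of scale for these figures, the short calculation below converts each angular resolution into the approximate spacing between adjacent samples at the stated detection range. This is a geometric sketch only: it assumes a flat target perpendicular to the beam, and the function name `sample_spacing_m` is hypothetical. Whether an object is actually detected depends on more than sample spacing.

```python
import math


def sample_spacing_m(range_m: float, resolution_deg: float) -> float:
    """Distance between two adjacent beams at a given range (chord length)."""
    half_angle = math.radians(resolution_deg) / 2.0
    return 2.0 * range_m * math.tan(half_angle)


DETECTION_RANGE_M = 70.79  # range figure quoted in the text

horizontal_spacing = sample_spacing_m(DETECTION_RANGE_M, 0.2)
vertical_spacing = sample_spacing_m(DETECTION_RANGE_M, 0.68)

print(f"horizontal spacing at range: {horizontal_spacing:.2f} m")
print(f"vertical spacing at range:   {vertical_spacing:.2f} m")
```

At the quoted range, the limits correspond to samples spaced roughly 0.25 m apart horizontally and roughly 0.84 m apart vertically.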

As of Sept. 30, 2025, the United Nations Regulation on Driver Control Assistance Systems (DCAS), adopted by the United Nations Economic Commission for Europe (UNECE) World Forum for the Harmonization of Vehicle Regulations, went into effect. Regulation No. 171 defines DCAS, a subset of ADAS, as systems that assist the driver in controlling the longitudinal and lateral motion of the vehicle on a sustained basis, while not taking over the entire driving task. This classification corresponds to SAE Level 2.

As other new regulations are proposed and go into effect, the technologies discussed here and others will inevitably be a part of the ultimate solutions.

